Environment Setup

- Enabling UCC in OpenMPI

- Enabling NCCL in UCC (Team Layer Selection)

- All The Variables

Results

- 1. Plain OpenMPI

- 2. OpenMPI with UCC

- 3. OpenMPI with UCC+NCCL

Scaling Plots

- Average Latency

- Bus Bandwidth

- Comparing MPI, UCC, UCC+NCCL

- Comparing UCC+NCCL, NCCL

Summary

Technical Details

This post showcases how to use the highly optimized, GPU-based collectives of Nvidia’s (NCCL library) by using it as a UCC backend through MPI. Further down, we show performance comparisons in form of graphs.

UCC (Unified Collective Communication) is a collective communication operations API and library developed under the umbrella of the UCX collaboration. UCC provides various highly-scalable and performant collectives for HPC, AI/ML, and IO workloads; together with non-blocking collective operations for MPI and OpenSHMEM models.

In the following, we take a traditional allreduce MPI example code and accelerate it by using UCC with NCCL through the correct environment variables. The best thing: The original MPI program stays untouched; no single NCCL or UCC function is used directly.

Environment Setup

To setup the MPI → UCC → NCCL stack, the right environment variables need to be set. Let’s go through them using the OpenMPI implementation.

Enabling UCC in OpenMPI

First, to enable UCC in OpenMPI, two important environment variables need to be set:

OMPI_MCA_coll_ucc_enable=1: Sets the MCA component for MPI to use UCC-accelerated collectives. Can be disabled by setting OMPI_MCA_coll_ucc_enable=0.OMPI_MCA_coll_ucc_priority=100: Is used to give priority for certain collective-implementing component. Currently, we must give UCC the highest priority (100), so that UCC is selected as the collective library over the other default collective libraries.

Now, on to selecting our desired NCCL backend within UCC!

Enabling NCCL in UCC (Team Layer Selection)

Different backends are supported in UCC as Team Layers (TLs). Beyond NCCL, also RCCL, SHARP, and basic UCX transports are supported. The TLs are thin, composable abstractions that are independent from each other and are further built up of Collective Layers (CLs).

Generally, the internal heuristics of UCC aim to always select the best-performing algorithm, given available Team and Collective Layers. However, in some cases these heuristics are not making the optimal choice, or one wants to select a certain TL for debugging or experimentation. Then in order to select a specific TL, an according environment variable has to be set, like:

UCC_<TL/CL>_<NAME>_TUNE=token1#token2...#tokenN

with # delimiting a list of tokens. A token has the following structure:

token=coll_type:msg_range:mem_type:team_size:score:alg

where : is the delimiter for a list of qualifiers. Each token must contain either the score or alg qualifier; the other qualifiers are optional. The score determines which algorithm of a certain backend is picked. See also the UCC Wiki FAQ for a little more insight.

In order to use the NCCL backend for allreduce operations on device-side CUDA buffers (cuda) for all message sizes, and give them the highest priority (inf), we need to set:

UCC_TL_NCCL_TUNE=allreduce:cuda:inf

Using the NCCL TL with UCC has some restrictions; to be specific Multi-Process Service (MPS) is not supported i.e. only 1 MPI rank per GPU is supported.

All The Variables

To sum up, the following variables need to be set to enable NCCL collectives used by UCC from OpenMPI:

OMPI_MCA_coll_ucc_enable=1

OMPI_MCA_coll_ucc_priority=100

UCC_TL_NCCL_TUNE=allreduce:cuda:inf

For more insights and detailed explanations, check out the UCC user guide.

Results

To showcase the performance of the different approaches, we use the allreduce latency test of the OSU Micro-Benchmarks (OSU). We give -d cuda as a runtime option in order to use device-side buffers.

We use NCCL v2.15.1 and UCC v1.1.0 on our JUWELS Booster system with Nvidia A100 GPUs (40 GB). 8 tasks are used, with one per GPU – i.e. two nodes in total

In order to see the potential of UCC+NCCL (UCC with the NCCL backend enabled) over sole UCC, we use message sizes of the order of 1 GB; otherwise, the startup overheads of UCC+NCCL will impact the results.

1. Plain OpenMPI

OMPI_MCA_coll_ucc_enable=0 \

srun ./osu_allreduce -d cuda -m 1000000000:1000000000 --mem-limit 1048576000

# OSU MPI-CUDA Allreduce Latency Test v7.0

# Size Avg Latency(us)

1000000000 1183590.64

2. OpenMPI with UCC

⚠️ Make sure that the NCCL is not available in the environment (module unload)! Otherwise, the UCC heuristics will select the NCCL TL.

OMPI_MCA_coll_ucc_enable=1 OMPI_MCA_coll_ucc_priority=100 \

srun ./osu_allreduce -d cuda -m 1000000000:1000000000 --mem-limit 1048576000

# OSU MPI-CUDA Allreduce Latency Test v7.0

# Size Avg Latency(us)

1000000000 23284.48

3. OpenMPI with UCC+NCCL

OMPI_MCA_coll_ucc_enable=1 OMPI_MCA_coll_ucc_priority=100 \

UCC_TL_NCCL_TUNE=allreduce:cuda:inf \

srun ./osu_allreduce -d cuda -m 1000000000:1000000000 --mem-limit 1048576000

# OSU MPI-CUDA Allreduce Latency Test v7.0

# Size Avg Latency(us)

1000000000 18230.13

For this latency, lower is better. Using NCCL with UCC (third variant) gives us the best result and is 65 times faster than the plain OpenMPI version.

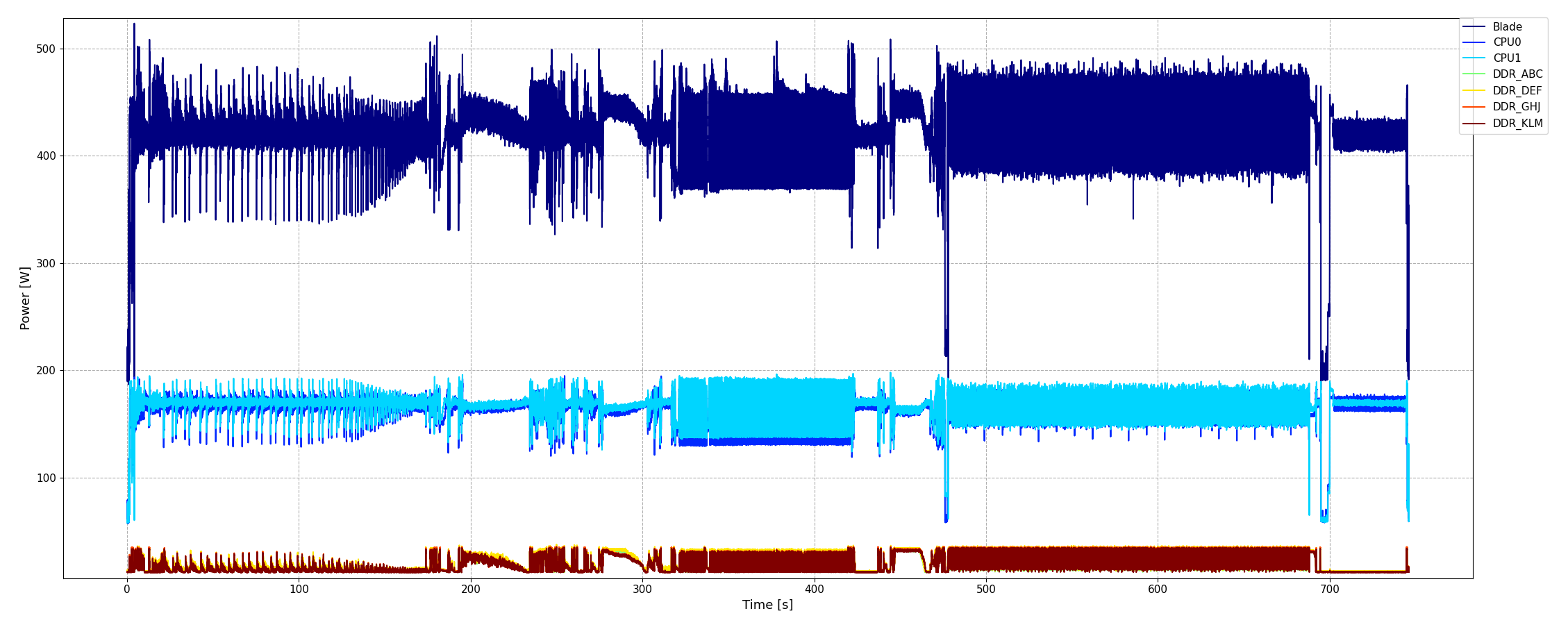

Scaling Plots

To investigate the scaling behavior, we run the three variants on a number of GPUs: 2, 4, 8, 12, 16 GPUs.

⚠️ Remember: Each JUWELS Booster node has 4 GPUs.

All runs in the scope of this investigation were confined to one DragonFly group, to limit influences of network traffic. Nevertheless, similar performance behaviour can be seen when using scattered nodes as well.

Average Latency

The graph shows average latency plotted over the number of GPUs, annotated with the number of nodes, number of tasks launched per node, and average latency values. Again, lower is better.

The order-of-magnitude improvement of any UCC-enabled execution over plain OpenMPI is consistent over the scope of tested number of GPUs. UCC+NCCL improves significantly over sole UCC for multi-node setups. Note that two configurations to use four GPUs are plotted, once using four GPUs in one node (lower points) and once using two GPUs each in two nodes (upper points).

The best latency for the largest setup of 16 GPUs is achieved from UCC+NCCL with only 20 ms GPUs.

Bus Bandwidth

It is also possible to calculate bandwidths associated to the operation. The naïve algorithmic bandwidth, \(B_\text{alg} = S/t\) (with data size \(S\) and execution time \(t\)), can be extended to the bus bandwidth \(B_\text{bus}\), which is independent of the number of ranks \(n\) and is defined per NCCL documentation to the following:

\begin{equation}

B_\text{bus} =

B_\text{alg} \times \frac{2(n − 1)}{n}

\end{equation}

It is an attempt to show the impact of the hardware bottleneck, i.e. NVLink, PCIe, and InfiniBand, in our case.

Comparing MPI, UCC, UCC+NCCL

When all four GPUs in a node are used (4 GPUs [nodes=1, taskspernode=4]), the theoretical peak unidirectional bandwidth for A100’s NVLink3 is 300 GB/s, and when only two GPUs in a node are used (2 GPUs [nodes=1, taskspernode=2]) the peak unidirectional bandwidth is 100 GB/s.

In the case of inter-node communications, InfiniBand HDR200 offers 200 Gbit/s per adapter. When using all four adapters for communication (GPUs > 4 and nodes > 1), the peak bandwidth is 4×200 Gbit/s = 100 GB/s; and half of it (50 GB/s) when using only two adapters are used (4 GPUs [nodes=2, taskspernode=2]).

All of this aids from the capacity of NCCL to utilize indirect connections between devices, leading to the use of more resources. Realistically achievable bandwidths are lower, of course.

The graph shows the bandwidth plotted over number of GPUs, annotated with number of nodes, number of tasks launched per node, and the bandwidth value. Again, with the NCCL backend of UCC we are able to come close to theoretical peak bandwidth, while the plain OpenMPI runs are more than an order of maximum lower.

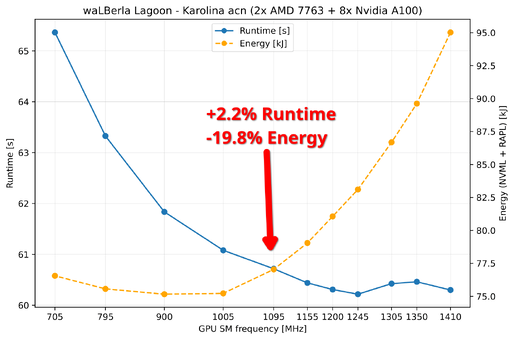

Comparing UCC+NCCL, NCCL

Finally, we can also compare the allreduce bandwidth between UCC+NCCL and sole NCCL, using the NCCL Tests in addition to the OSU benchmarks. As before, the graph plots average bus bandwidth over number of GPUs for a 1 GB message size. The right y axis shows the relative difference between OpenMPI with UCC+NCCL and direct NCCL. One can see that the overhead introduced by UCC is minimal; for multi-node runs it is about 0.5%.

Furthermore, OSU Micro-Benchmarks have also added support to benchmark NCCL directly which is also plotted in the graph.

Summary

Enabling NCCL as a backend to UCC, and using UCC from OpenMPI is very easy and only a matter of setting two (if UCC heuristics select the desired TL) or three environment variables – no code changes necessary. Performance gains are significant, like 65× in our example configurations shown above. Of course, a proper setup is needed.

Acknowledgements: Thanks go out to Jiri Kraus (NVIDIA) for being available for a multitude of questions arising during writing of this article. Thanks, Jiri!

Technical Details

- Build UCC

- Modules used for the results, all built according to our published EasyBuild infrastructure:

- NCCL/2.15.1

- Cuda/11.7

- OpenMPI/4.1.4

- GCC/11.3.0

- UCX/1.13.1

- UCC/1.1.0

- Building OSU:

>> cd osu-micro-benchmarks-7.0.1

>> ./configure CC=<path to mpicc> CXX=<path to mpicxx> --enable-cuda –with-cuda-include=<path to CUDA include> \--with-cuda-libpath=<path to cuda lib > --prefix=$(pwd)

>> make -j 24; make install

- Sample Script to run on JUWELS Booster

#!/usr/bin/env bash

ml purge

ml load Stages/2023

ml load GCCcore/.11.3.0 UCX UCC GCC/11.3.0 OpenMPI/4.1.4

ml load NCCL/default-CUDA-11.7

ml list

export OMPI_MCA_coll_ucc_enable=1

export OMPI_MCA_coll_ucc_priority=100

export UCC_TL_NCCL_TUNE=allreduce:cuda:inf

export OMPI_MCA_coll_ucc_verbose=3

export UCC_LOG_LEVEL=INFO

NODES=2

TASKSPERNODE=4

GPU=8

srun --jobid $SLURM_JOB_ID --nodes=${NODES} --ntasks-per-node=${TASKSPERNODE} --gres=gpu:4 \

./osu-micro-benchmarks-7.0.1/c/mpi/collective/osu_allreduce -d cuda -m 1000000000:1000000000 --mem-limit 1048576000 > "OSU_NODE${NODES}_GPU${GPU}_UCCNCCL_${SLURM_JOB_ID}.txt" 2>&1

]]>

Together with our colleagues from Helmholtz-Zentrum Dresden-Rossendorf (HZDR) and in collaboration with HIDA and OpenHackathons, we hosted the Helmholtz GPU Hackathon 2023 in Jülich in May.

Together with our colleagues from Helmholtz-Zentrum Dresden-Rossendorf (HZDR) and in collaboration with HIDA and OpenHackathons, we hosted the Helmholtz GPU Hackathon 2023 in Jülich in May.